PCA is an unsupervised dimensionality reduction algorithm. In scikit-learn, `get_covariance()` computes the data covariance from the generative model as `cov = components_.T * S**2 * components_ + sigma2 * eye(n_features)`, where `S**2` contains the explained variances and `sigma2` contains the noise variances.
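To make the covariance formula concrete, here is a minimal NumPy sketch. It does not call scikit-learn; it rebuilds the same quantities from an SVD of centered data. The toy data and the variable names (`components`, `S2`, `sigma2`, `cov_model`) are assumptions chosen for illustration. All components are kept, in which case the noise variance is zero and the formula should reproduce the ordinary sample covariance.

```python
import numpy as np

# Toy data: 200 samples, 3 correlated features (an assumption for illustration).
rng = np.random.default_rng(0)
mixing = np.array([[2.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 0.5]])
X = rng.normal(size=(200, 3)) @ mixing

# Center the data and take the SVD; the rows of Vt are the principal axes.
Xc = X - X.mean(axis=0)
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

components = Vt                      # shape (n_components, n_features)
S2 = s**2 / (X.shape[0] - 1)         # explained variances
sigma2 = 0.0                         # noise variance is zero when every component is kept

# cov = components.T * S2 * components + sigma2 * eye(n_features)
cov_model = components.T @ np.diag(S2) @ components + sigma2 * np.eye(X.shape[1])

# Compare with the sample covariance computed directly.
cov_sample = np.cov(X, rowvar=False)
max_abs_diff = float(np.abs(cov_model - cov_sample).max())
print(max_abs_diff)
```

When fewer components are kept, the discarded variance is what the `sigma2 * eye(n_features)` term is meant to account for, so the result becomes an approximation of the sample covariance rather than an exact match.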

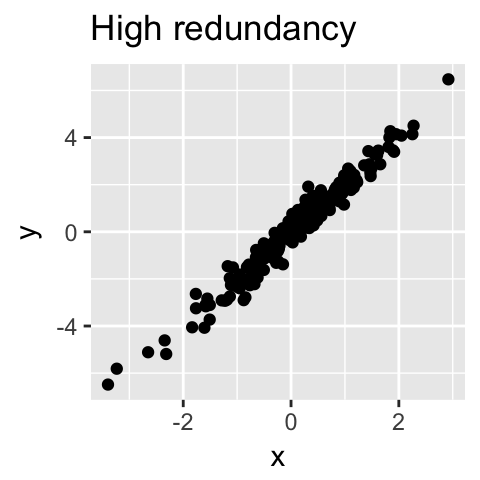

Principal Component Analysis (PCA) is one of the most commonly used unsupervised machine learning algorithms, with applications that include exploratory data analysis, dimensionality reduction, information compression, and data de-noising. It is a statistical procedure that converts observations of correlated features into a set of linearly uncorrelated features by means of an orthogonal transformation.

The figure above shows PCA of a multivariate Gaussian distribution centered at (1, 3), with a standard deviation of 3 in roughly the (0.866, 0.5) direction and of 1 in the orthogonal direction. The vectors shown are the eigenvectors of the covariance matrix, scaled by the square root of the corresponding eigenvalue and shifted so their tails are at the mean.

Regression on the principal components, referred to as Principal Component Regression, has the following linear equation: $Y = W_1 PC_1 + W_2 PC_2 + \dots + W_{10} PC_{10} + C$. Here the PCs (PC1, PC2, ...) are uncorrelated with each other, so the correlation among these derived features is zero.

In scikit-learn's `PCA`, `get_covariance()` returns `cov`, an array of shape `(n_features, n_features)` holding the estimated covariance of the data, and `get_feature_names_out(input_features=None)` returns the output feature names.
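The Gaussian example and the regression equation can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not a reference implementation: the helper `pca_eig`, the sample size, the random seed, and the synthetic response `y` are all hypothetical, and only two principal components exist here rather than the ten in the equation.

```python
import numpy as np

def pca_eig(X):
    """Return (mean, eigenvalues, eigenvectors) of the sample covariance,
    sorted by decreasing eigenvalue. Eigenvectors are stored as columns."""
    mean = X.mean(axis=0)
    cov = np.cov(X - mean, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigh: covariance is symmetric
    order = np.argsort(eigvals)[::-1]
    return mean, eigvals[order], eigvecs[:, order]

# Sample a 2-D Gaussian centered at (1, 3): standard deviation 3 along
# roughly (0.866, 0.5) and standard deviation 1 along the orthogonal direction.
rng = np.random.default_rng(42)
direction = np.array([0.866, 0.5])
direction = direction / np.linalg.norm(direction)
orthogonal = np.array([-direction[1], direction[0]])
n = 5000
X = (np.array([1.0, 3.0])
     + 3.0 * rng.standard_normal((n, 1)) * direction
     + 1.0 * rng.standard_normal((n, 1)) * orthogonal)

mean, eigvals, eigvecs = pca_eig(X)

# Project onto the principal axes; the resulting scores are uncorrelated.
scores = (X - mean) @ eigvecs
score_corr = float(np.corrcoef(scores, rowvar=False)[0, 1])

# Arrows as in the figure: each eigenvector scaled by sqrt(eigenvalue),
# to be drawn with its tail at the mean.
arrows = eigvecs * np.sqrt(eigvals)

# Principal Component Regression: Y = W1*PC1 + W2*PC2 + C, fitted by
# least squares on the scores. The response y is synthetic (an assumption).
y = 2.0 * X[:, 0] - X[:, 1] + 0.1 * rng.standard_normal(n)
design = np.column_stack([scores, np.ones(n)])
coef, *_ = np.linalg.lstsq(design, y, rcond=None)
W, C = coef[:-1], coef[-1]

print("sqrt(eigenvalues):", np.sqrt(eigvals))
print("correlation between PC scores:", score_corr)
print("PCR weights:", W, "intercept:", C)
```

Because the scores are centered and mutually uncorrelated, each weight can be estimated independently of the others, and the intercept `C` equals the mean of `y`. In practice the benefit of Principal Component Regression comes from keeping only the leading components; keeping all of them, as here, is equivalent to ordinary least squares on the original features.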
